Current category: python (15)

- Feb 18 Tue 2020 16:24

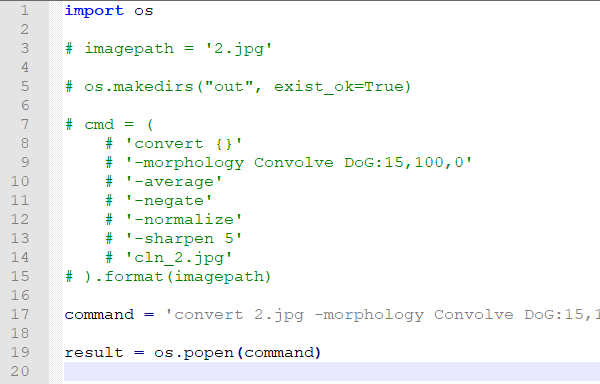

Running cmd commands from Python
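A minimal sketch of one common way to do this, assuming the standard-library `subprocess` module is the tool in question (the post body is not shown on this page). `run_command` is a hypothetical helper name; it runs a command given as a list of arguments and returns the exit code together with the captured output.

```python
import subprocess
import sys


def run_command(args):
    """Run a command (a list of arguments) and return (exit code, output text)."""
    completed = subprocess.run(
        args,
        stdout=subprocess.PIPE,    # capture standard output
        stderr=subprocess.STDOUT,  # fold error output into the same stream
        universal_newlines=True,   # decode bytes to str
    )
    return completed.returncode, completed.stdout


# The current Python interpreter is used as a portable stand-in for any command.
code, output = run_command([sys.executable, "-c", "print('hello from a subprocess')"])
print(code, output.strip())
```

Passing the command as a list avoids shell quoting problems; a non-zero exit code signals that the command itself reported a failure.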

- Feb 18 Tue 2020 10:37

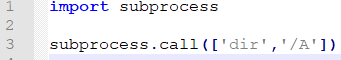

Python FileNotFoundError: [WinError 2] The system cannot find the file specified
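One frequent cause of this error, assuming it was raised by `subprocess` (the post body is not shown on this page), is that the program name cannot be resolved to an executable file. The sketch below shows how the error can be caught and how `shutil.which` can check a name beforehand. `safe_run` and the program name `no-such-program-xyz` are hypothetical.

```python
import shutil
import subprocess


def safe_run(args):
    """Run a command; return its exit code, or None if the executable is missing."""
    try:
        return subprocess.run(args).returncode
    except FileNotFoundError:
        # Raised when the first element of args is not an executable file
        # that the operating system can locate.
        return None


missing = "no-such-program-xyz"  # hypothetical name, assumed not to be installed
print(shutil.which(missing))     # None when the name is not found on PATH
print(safe_run([missing]))       # None when FileNotFoundError was caught
```

On Windows, built-ins such as `dir` or `copy` belong to cmd.exe and are not separate executables, so calling them without `shell=True` (or without going through `cmd /c`) triggers the same error.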

- Feb 16 Sun 2020 18:53

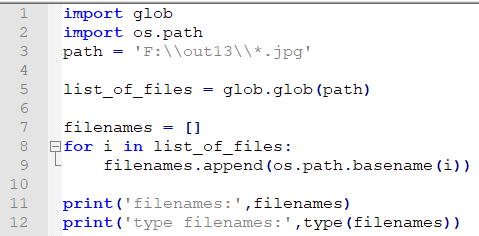

Getting the names of all files under a path
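A minimal sketch of this task using only the standard library, assuming "all files" means the regular files directly inside one folder (not subfolders). `list_file_names` is a hypothetical helper name.

```python
import os
import tempfile


def list_file_names(folder):
    """Return the sorted names of the regular files directly inside folder."""
    return sorted(
        name for name in os.listdir(folder)
        if os.path.isfile(os.path.join(folder, name))
    )


# Demonstrate on a throwaway directory so the example is self-contained.
with tempfile.TemporaryDirectory() as folder:
    for name in ("b.txt", "a.txt"):
        open(os.path.join(folder, name), "w").close()
    os.mkdir(os.path.join(folder, "subdir"))  # directories are skipped
    print(list_file_names(folder))            # ['a.txt', 'b.txt']
```

To include files in subfolders as well, `os.walk(folder)` could be used in place of `os.listdir(folder)`.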

- Feb 11 Tue 2020 14:10

About the Python import path
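As a rough illustration of the topic, assuming the post deals with `sys.path` (the post body is not shown on this page): Python searches the directories in `sys.path` in order when it imports a module, so adding a directory makes the modules inside it importable. The module name `demo_helper` is hypothetical.

```python
import os
import sys
import tempfile

# sys.path is the list of directories Python searches, in order, on import.
with tempfile.TemporaryDirectory() as folder:
    # Write a tiny module into a directory that is not yet on the search path.
    with open(os.path.join(folder, "demo_helper.py"), "w") as f:
        f.write("VALUE = 42\n")

    sys.path.insert(0, folder)  # make the directory importable
    import demo_helper          # hypothetical module name
    print(demo_helper.VALUE)    # 42
    sys.path.remove(folder)     # undo the change
```

Editing `sys.path` only affects the running process; the `PYTHONPATH` environment variable is the usual way to make such a change outside the script.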

- Dec 25 Wed 2019 10:53

fuzzysearch in python

from fuzzysearch import find_near_matches

- Nov 26 Tue 2019 20:48

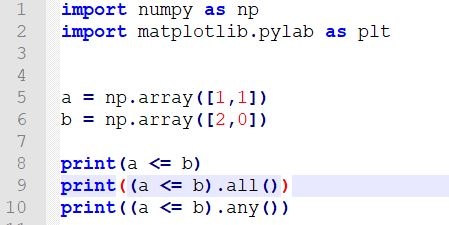

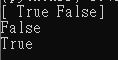

Comparing numpy arrays: greater than, less than, and equality
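A short sketch of what such comparisons look like, assuming the post covers element-wise comparison (the post body is not shown on this page). Comparison operators on numpy arrays return boolean arrays rather than a single `True` or `False`; the arrays `a` and `b` are made-up sample data.

```python
import numpy as np

a = np.array([1, 5, 3])
b = np.array([2, 5, 1])

greater = a > b   # element-wise: [False, False, True]
equal = a == b    # element-wise: [False, True, False]

print(greater, equal)
print(np.array_equal(a, b))  # False: the arrays differ as a whole
print((a >= b).all())        # False: 1 >= 2 fails for the first pair
print(a[a > b])              # [3]: a boolean mask selects the matching elements
```

Because the result is an array, using it directly in an `if` statement raises a `ValueError`; `.any()` or `.all()` reduces it to a single boolean first.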

- Jun 10 Mon 2019 14:45

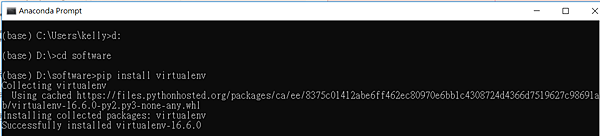

virtualenv

- Jun 08 Sat 2019 15:09

virtualenv && building a website with flask

- Nov 30 Fri 2018 16:46

Logs

# Youtube video tutorial: https://www.youtube.com/channel/UCdyjiB5H8Pu7aDTNVXTTpcg
# Youku video tutorial: http://i.youku.com/pythontutorial

- Nov 30 Fri 2018 16:44

TensorBoard (2)

import tensorflow as tf
import numpy as np

# Define a function that adds a layer
def add_layer(inputs, input_tensors, output_tensors, activation_function=None):
    with tf.name_scope('Layer'):
        with tf.name_scope('Weights'):
            W = tf.Variable(tf.random_normal([input_tensors, output_tensors]))
        with tf.name_scope('Biases'):
            b = tf.Variable(tf.zeros([1, output_tensors]))
        with tf.name_scope('Formula'):
            formula = tf.add(tf.matmul(inputs, W), b)
        if activation_function is None:
            outputs = formula
        else:
            outputs = activation_function(formula)
        return outputs

# Prepare the data
x_data = np.random.rand(100)
x_data = x_data.reshape(len(x_data), 1)
y_data = x_data * 0.1 + 0.3

# Create the feeds
with tf.name_scope('Inputs'):
    x_feeds = tf.placeholder(tf.float32, shape=[None, 1])
    y_feeds = tf.placeholder(tf.float32, shape=[None, 1])

# Add one hidden layer
hidden_layer = add_layer(x_feeds, input_tensors=1, output_tensors=10, activation_function=None)

# Add one output layer
output_layer = add_layer(hidden_layer, input_tensors=10, output_tensors=1, activation_function=None)

# Define `loss` and the optimizer to use
with tf.name_scope('Loss'):
    loss = tf.reduce_mean(tf.square(y_feeds - output_layer))
with tf.name_scope('Train'):
    optimizer = tf.train.GradientDescentOptimizer(learning_rate=0.01)
    train = optimizer.minimize(loss)

# Initialize the graph
init = tf.global_variables_initializer()
sess = tf.Session()

# Write the graph out for visualization
writer = tf.summary.FileWriter("TensorBoard/", sess.graph)

# Run the computation
sess.run(init)
for step in range(201):
    sess.run(train, feed_dict={x_feeds: x_data, y_feeds: y_data})
    # if step % 20 == 0:
    #     print(sess.run(loss, feed_dict={x_feeds: x_data, y_feeds: y_data}))
sess.close()

# Open cmd and cd to the directory one level above the tensorflow folder
# $ tensorboard --logdir=TensorBoard

- Nov 30 Fri 2018 16:42

tensorboard (1)

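'''
To work around the slowness of basic Python operations
(because tensorflow uses the GPU and multitasking; e.g. computing A+B with TF is slower than with C)
tensorflow builds the complete expression ahead of time
and then evaluates it all at once
'''
import tensorflow as tf

# None means any number of samples, 784 is the total number of pixels per sample;
# a placeholder reserves space for data that is fed in later
x = tf.placeholder(tf.float32, [None, 784])  # input (mnist images)

# a Variable can be modified during the computation
W = tf.Variable(tf.zeros([784, 10]))  # weights; 10 = number of classes, one weight per pixel for each class
b = tf.Variable(tf.zeros([10]))  # bias

# softmax tidies up the network result (Wx+b) before the final output,
# so that every value lies between 0 and 1 and they sum to 1
y = tf.nn.softmax(tf.matmul(x, W) + b)

# Training
# Loss (/Cost) is the gap between the currently trained model and the actual model; it is used to judge the model
# Use cross-entropy as the loss function
y_ = tf.placeholder(tf.float32, [None, 10])  # the actual answers
# The argument of reduce_mean was missing in the original excerpt;
# the standard cross-entropy -sum(y_ * log(y)) is assumed here
cross_entropy = tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))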

- Nov 30 Fri 2018 16:37

Capturing frames with the laptop webcam

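import cv2

# Capture images from the webcam
cv2.namedWindow("preview")
vc = cv2.VideoCapture(0)

if vc.isOpened():  # try to get the first frame
    rval, frame = vc.read()
else:
    rval = False

count = 0
while rval:
    cv2.imshow("preview", frame)  # show the frame in the preview window
    rval, frame = vc.read()
    if not rval:  # stop when no frame could be read, so imwrite never receives None
        break
    cv2.imwrite("frame%d.jpg" % count, frame)  # save the frame as a jpg file
    key = cv2.waitKey(100)  # wait 100 ms between frames; adjusts the capture rate
    if key == 27:  # press esc to stop
        break
    count += 1

vc.release()  # free the camera
cv2.destroyWindow("preview")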

- Nov 30 Fri 2018 16:15

MNIST practice

import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

INPUT_NODE = 784
OUTPUT_NODE = 10
LAYER1_NODE = 500
BATCH_SIZE = 100
LEARNING_RATE_BASE = 0.8
LEARNING_RATE_DECAY = 0.99
REGULARIZATION_RATE = 0.0001
TRAINING_STEPS = 30000
MOVING_AVERAGE_DECAY = 0.99

# Forward pass; when avg_class is given, the moving averages of the parameters are used
def inference(input_tensor, avg_class, weights1,
              biases1, weights2, biases2):
    if avg_class is None:
        layer1 = tf.nn.relu(tf.matmul(input_tensor, weights1) + biases1)
        return tf.matmul(layer1, weights2) + biases2
    else:
        layer1 = tf.nn.relu(
            tf.matmul(input_tensor, avg_class.average(weights1)) + avg_class.average(biases1))
        return tf.matmul(layer1, avg_class.average(weights2)) + avg_class.average(biases2)

# The model training process
def train(mnist):
    x = tf.placeholder(tf.float32, [None, INPUT_NODE], name='x-input')
    # One row of one-hot labels per sample, so the first dimension is None
    y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')
    # Create the hidden layer parameters
    weights1 = tf.Variable(
        tf.truncated_normal([INPUT_NODE, LAYER1_NODE], stddev=0.1))
    biases1 = tf.Variable(tf.constant(0.1, shape=[LAYER1_NODE]))
    # Create the output layer parameters
    weights2 = tf.Variable(
        tf.truncated_normal([LAYER1_NODE, OUTPUT_NODE], stddev=0.1))
    biases2 = tf.Variable(tf.constant(0.1, shape=[OUTPUT_NODE]))
    y = inference(x, None, weights1, biases1, weights2, biases2)
    global_step = tf.Variable(0, trainable=False)
    variable_averages = tf.train.ExponentialMovingAverage(
        MOVING_AVERAGE_DECAY, global_step)
    variables_averages_op = variable_averages.apply(
        tf.trainable_variables())
    average_y = inference(
        x, variable_averages, weights1, biases1, weights2, biases2)
    # This function only accepts keyword arguments
    cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(
        logits=y, labels=tf.argmax(y_, 1))
    cross_entropy_mean = tf.reduce_mean(cross_entropy)
    regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)
    regularization = regularizer(weights1) + regularizer(weights2)
    loss = cross_entropy_mean + regularization
    learning_rate = tf.train.exponential_decay(
        LEARNING_RATE_BASE, global_step, mnist.train.num_examples / BATCH_SIZE,
        LEARNING_RATE_DECAY)
    train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)
    with tf.control_dependencies([train_step, variables_averages_op]):
        train_op = tf.no_op(name='train')
    correct_prediction = tf.equal(tf.argmax(average_y, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))  # accuracy of the model on this data set
    with tf.Session() as sess:
        tf.global_variables_initializer().run()
        validate_feed = {x: mnist.validation.images,
                         y_: mnist.validation.labels}
        test_feed = {x: mnist.test.images, y_: mnist.test.labels}
        for i in range(TRAINING_STEPS):
            if i % 1000 == 0:
                validate_acc = sess.run(accuracy, feed_dict=validate_feed)
                print("After %d training step(s), validation accuracy "
                      "using average model is %g " % (i, validate_acc))
            xs, ys = mnist.train.next_batch(BATCH_SIZE)
            sess.run(train_op, feed_dict={x: xs, y_: ys})  # run one training step
        test_acc = sess.run(accuracy, feed_dict=test_feed)
        print("After %d training step(s), test accuracy using average "
              "model is %g " % (TRAINING_STEPS, test_acc))

# Main program entry point
def main(argv=None):
    mnist = input_data.read_data_sets("/tmp/data", one_hot=True)
    train(mnist)

if __name__ == '__main__':
    tf.app.run()

- Nov 30 Fri 2018 15:47

Plotting 2D graphs with matplotlib in Python & how to paste code in a blog

import matplotlib.pyplot as plt
import numpy as np

# Plot a sine wave from 0 to 180 degrees
x = np.arange(0, 180)
y = np.sin(x * np.pi / 180.0)
plt.plot(x, y)

# Set the axis ranges
plt.xlim(-30, 390)
plt.ylim(-1.5, 1.5)
plt.xlabel("x-axis")
plt.ylabel("y-axis")
plt.title("The title")
plt.show()

- Apr 22 Sun 2018 13:44

Understanding global variables in for loops - python